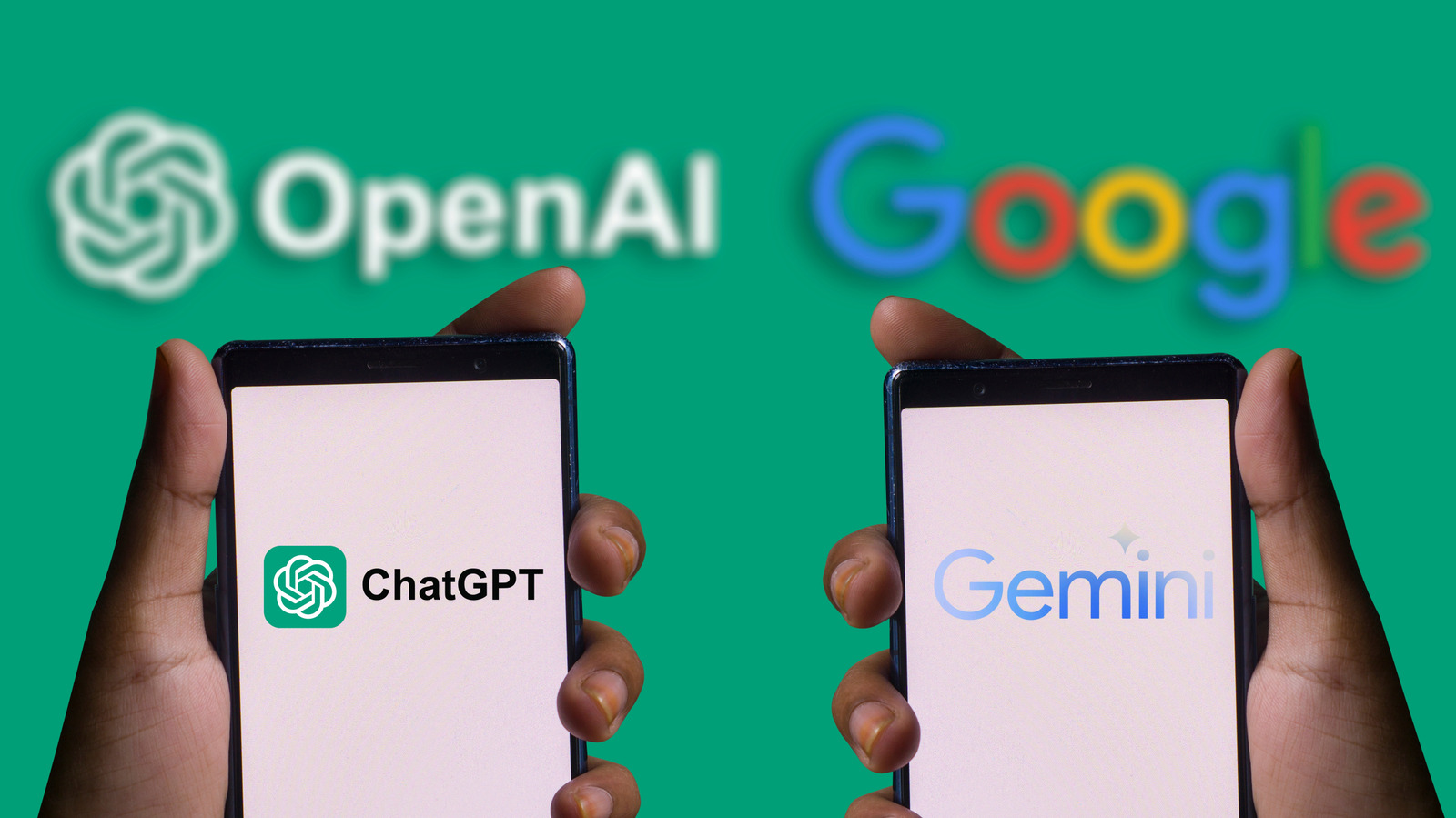

ChatGPT Outshines Gemini in Key AI Benchmarks

Recent benchmark results show measurable differences between two leading artificial intelligence systems, ChatGPT and Gemini. Competition between the two has intensified, and both exhibit advanced capabilities. However, certain benchmarks indicate that ChatGPT currently outperforms Gemini in reasoning, problem-solving, and abstract thinking.

Benchmark Performance: ChatGPT vs. Gemini

Comparing ChatGPT and Gemini is complex, as both systems are continuously evolving. In December 2025, OpenAI released an updated version of ChatGPT, referred to as ChatGPT-5.2, which quickly restored the product's competitive edge after concerns about its performance. Evaluating AI systems cannot rely solely on subjective preferences, because the output of large language models (LLMs) is stochastic: the same prompt can yield different responses, which complicates direct comparisons.
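To illustrate why one prompt can produce different answers, here is a minimal sketch of temperature sampling over a toy next-token distribution. The vocabulary, the logits, and the names `softmax` and `sample_token` are illustrative assumptions; they do not describe how either ChatGPT or Gemini is actually implemented.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(vocab, logits, temperature=1.0, rng=random):
    """Draw one token at random according to the softmax probabilities."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical three-word vocabulary and scores, chosen only for illustration.
VOCAB = ["yes", "no", "maybe"]
LOGITS = [2.0, 1.5, 0.5]

if __name__ == "__main__":
    rng = random.Random()
    # Five draws from the same "prompt" will usually not all agree.
    print([sample_token(VOCAB, LOGITS, 1.0, rng) for _ in range(5)])
```

At a temperature near zero the highest-scoring token is chosen almost every time, which is why low-temperature settings give more repeatable answers.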

A more reliable approach is to examine established benchmarks that assess AI capabilities. One such benchmark is GPQA Diamond, designed to evaluate PhD-level reasoning in scientific disciplines. The test consists of complex questions that require understanding and applying multiple scientific concepts. On this benchmark, ChatGPT-5.2 scored 92.4%, half a percentage point ahead of Gemini 3 Pro at 91.9%. For context, a PhD graduate would typically score around 65%, while non-experts score approximately 34%.

Another significant area of assessment is software engineering, which is increasingly relevant for AI systems tasked with debugging and resolving code issues. The SWE-Bench Pro benchmark evaluates the ability of AI to tackle real-world coding challenges sourced from GitHub. ChatGPT-5.2 resolved about 24% of the issues presented, while Gemini resolved 18%. Although these figures may seem modest, they reflect the difficulty of the tasks; human engineers achieve a 100% success rate.

Abstract Reasoning and Visual Problem Solving

Abstract reasoning is another critical metric on which ChatGPT leads. The ARC-AGI-2 test, launched in March 2025, assesses an AI's ability to identify patterns and apply them to new scenarios. ChatGPT-5.2 Pro achieved a score of 54.2%, while Gemini 3 Pro trailed at 31.1%, a gap of 23.1 percentage points. The difficulty of this benchmark reflects its aim of measuring human-like intelligence, an area where AI still faces substantial challenges.

Despite these results, it is essential to acknowledge that performance metrics in AI can fluctuate rapidly. As both OpenAI and Google advance their technologies, the rankings could change with subsequent releases. This analysis focuses on the latest versions of the systems, specifically ChatGPT-5.2 and Gemini 3, highlighting instances where ChatGPT has outperformed its competitor.

Gemini does excel on some benchmarks, such as SWE-Bench Bash Only and Humanity’s Last Exam. The three assessments covered here nonetheless give a clear picture of ChatGPT’s strengths in knowledge application, problem-solving, and reasoning.
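The margins on the three assessments can be tallied with a short script. The scores are the ones quoted in this article (the SWE-Bench Pro figure for ChatGPT is reported only as "about 24%", and the ARC-AGI-2 figure is for ChatGPT-5.2 Pro); the `SCORES` layout and the `margin` helper are illustrative assumptions.

```python
# Scores as quoted in the article: (ChatGPT, Gemini), in percent.
SCORES = {
    "GPQA Diamond": (92.4, 91.9),
    "SWE-Bench Pro": (24.0, 18.0),  # ChatGPT figure is approximate ("about 24%")
    "ARC-AGI-2": (54.2, 31.1),      # ChatGPT figure is for the Pro variant
}

def margin(chatgpt, gemini):
    """Lead of ChatGPT over Gemini in percentage points, to one decimal."""
    return round(chatgpt - gemini, 1)

for name, (chatgpt, gemini) in SCORES.items():
    print(f"{name}: ChatGPT {chatgpt}% vs Gemini {gemini}% "
          f"(margin {margin(chatgpt, gemini):+.1f} points)")
```

The spread is uneven: the GPQA Diamond lead is half a point, while the ARC-AGI-2 lead exceeds 23 points, so the three results should not be read as equally decisive.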

As the AI landscape continues to evolve, ongoing evaluations and comparisons will be crucial. Users should remain informed about these developments, as both ChatGPT and Gemini strive to enhance their capabilities and redefine the boundaries of artificial intelligence.
